Neural Networks Overview

Today, neural networks are used to solve a wide variety of problems, some of which have been solved by existing statistical methods, and some of which have not. These applications fall into one of the following three categories:

Forecasting: predicting one or more quantitative outcomes from both quantitative and nominal input data.

Classification: classifying input data into one of two or more categories.

Statistical pattern recognition: uncovering patterns, typically spatial or temporal, among a set of variables.

Forecasting, pattern recognition and classification problems are not new. They existed years before the discovery of neural network solutions in the 1980s. What is new is that neural networks provide a single framework for solving so many traditional problems and, in some cases, extend the range of problems that can be solved.

Traditionally, these problems were solved using a variety of widely known statistical methods:

linear regression and general least squares

logistic regression and discrimination

principal component analysis

discriminant analysis

k-nearest neighbor classification

ARMA and NARMA time series forecasts

In many cases, simple neural network configurations yield the same solution as many traditional statistical applications. For example, a single-layer, feedforward neural network with linear activation for its output perceptron is equivalent to a general linear regression fit. Neural networks can provide more accurate and robust solutions for problems where traditional methods do not completely apply.

Mandic and Chambers (2001) note that traditional methods for time series forecasting are unsuitable when a time series:

is non-stationary

has large amounts of noise, such as a biomedical series

is too short

ARIMA and other traditional time series approaches can produce poor forecasts when one or more of the above conditions exist. The forecasts of ARMA and non-linear ARMA (NARMA) depend heavily upon key assumptions about the model or underlying relationship between the output of the series and its patterns.

Neural networks, on the other hand, adapt to changes in a non-stationary series and can produce reliable forecasts even when the series contains a good deal of noise or when only a short series is available for training. Neural networks provide a single tool for solving many problems traditionally solved using a wide variety of statistical tools and for solving problems when traditional methods fail to provide an acceptable solution.

Although neural network solutions to forecasting, pattern recognition and classification problems can vary vastly, they are always the result of computations that proceed from the network inputs to the network outputs. The network inputs are referred to as patterns, and outputs are referred to as classes. Frequently the flow of these computations is in one direction, from the network input patterns to its outputs. Networks with forward-only flow are referred to as feedforward networks.

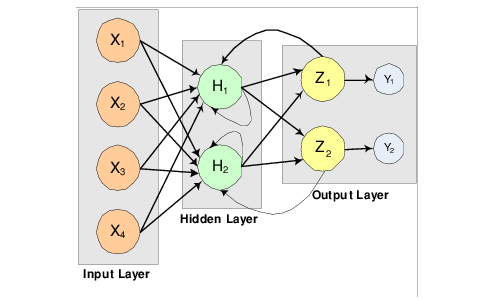

Other networks, such as recurrent neural networks, allow data and information to flow in both directions; see Mandic and Chambers (2001).

A neural network is defined not only by its architecture and flow, or interconnections, but also by computations used to transmit information from one node or input to another node. These computations are determined by network weights. The process of fitting a network to existing data to determine these weights is referred to as training the network, and the data used in this process are referred to as patterns. Individual network inputs are referred to as attributes and outputs are referred to as classes.

Neural Network and Common Statistical Terminology Synonyms lists terms used to describe neural networks that are synonymous to common statistical terminology.

Neural Networks – History and Terminology

The Threshold Neuron

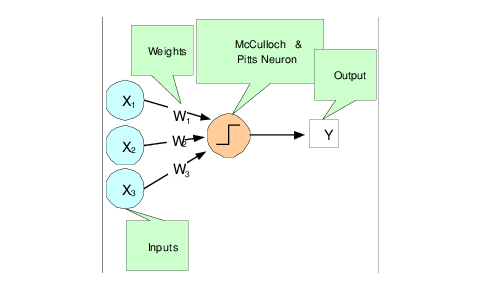

McCulloch and Pitts (1943) wrote one of the first published works on neural networks. This paper describes the threshold neuron as a model of how the human brain stores and processes information.

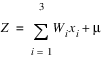

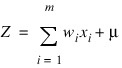

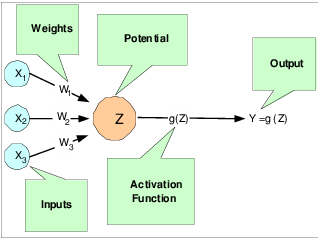

All inputs to a threshold neuron are combined into a single number, Z, using the following weighted sum:

$$Z = \sum_{i=1}^{m} w_i x_i + \mu$$

where m is the number of inputs and $w_i$ is the weight associated with the ith input (attribute) $x_i$. The term μ in this calculation is referred to as the bias term. In traditional statistical terminology it might be referred to as the intercept. The weights and bias terms in this calculation are estimated during network training.

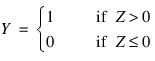

In McCulloch and Pitts’ (1943) description of the threshold neuron, the neuron does not respond to its inputs unless Z is greater than zero. If Z is greater than zero then the output from this neuron is set to 1. If Z is less than or equal to zero the output is zero:

$$Y = \begin{cases} 1 & \text{if } Z > 0 \\ 0 & \text{if } Z \le 0 \end{cases}$$

where Y is the neuron’s output.
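The threshold rule can be sketched in a few lines of Python. This is only an illustration: the function name `threshold_neuron` and the AND-gate weights are hypothetical choices and do not come from McCulloch and Pitts (1943).

```python
def threshold_neuron(inputs, weights, bias):
    """Return 1 if the potential Z = sum(w_i * x_i) + bias is > 0, else 0."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if z > 0 else 0


# Hypothetical weights and bias that make the neuron act like a logical AND.
and_weights, and_bias = [1.0, 1.0], -1.5
and_table = [threshold_neuron([a, b], and_weights, and_bias)
             for a in (0, 1) for b in (0, 1)]
```

With these weights the potential is positive only when both inputs are 1, so `and_table` holds the outputs for the inputs (0,0), (0,1), (1,0) and (1,1) in that order.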

For years after McCulloch and Pitts’ (1943) article, interest in the McCulloch and Pitts neural network was limited to theoretical discussions, such as Hebb (1949), which describe learning, memory and the brain’s structure.

The Perceptron

The McCulloch and Pitts neuron is also referred to as a threshold neuron since it abruptly changes its output from 0 to 1 when its potential, Z, crosses a threshold. Mathematically, this behavior can be viewed as a step function that maps the neuron’s potential, Z, to the neuron’s output, Y.

Rosenblatt (1958) extended the McCulloch and Pitts threshold neuron by replacing this step function with a continuous function that maps Z to Y. The Rosenblatt neuron is referred to as the perceptron, and the continuous function mapping Z to Y makes it easier to train a network of perceptrons than a network of threshold neurons.

Unlike the threshold neuron, which produces purely binary output, the perceptron produces analog output. Carefully selecting the analog function makes Rosenblatt’s perceptron differentiable, whereas the threshold neuron is not. This simplifies the training algorithm.

Like the threshold neuron, Rosenblatt’s perceptron starts by calculating a weighted sum of its inputs:

$$Z = \sum_{i=1}^{m} w_i x_i + \mu$$

This is referred to as the perceptron’s potential.

Rosenblatt’s perceptron calculates its analog output from its potential. There are many choices for this calculation. The function used for this calculation is referred to as the activation function, as shown in Figure 14-4: A Neural Net Perceptron on page 978.

As shown in A Neural Net Perceptron, perceptrons consist of the following five components:

1. Inputs: x1, x2, and x3

2. Input Weights: W1, W2, and W3

3. Potential: $Z = \sum_{i=1}^{3} W_i x_i + \mu$, where μ is a bias correction

4. Activation Function: g(Z)

5. Output: Y = g(Z)

Like threshold neurons, perceptron inputs can be either the initial raw data inputs or the output from another perceptron. The primary purpose of network training is to estimate the weights associated with each perceptron’s potential. The activation function maps this potential to the perceptron’s output.
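The five components can be put together in a short sketch. The function names and the numeric inputs, weights and bias below are hypothetical; the logistic function is used only as one example of an activation g.

```python
import math


def logistic(z):
    """One possible activation g(Z); it maps any potential into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def identity(z):
    """Pass the potential through unchanged."""
    return z


def perceptron(inputs, weights, bias, activation):
    """Compute the potential Z = sum(W_i * x_i) + bias, then the output Y = g(Z)."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Hypothetical inputs x1..x3, weights W1..W3 and bias chosen so that Z is about 0.
x = [1.0, 2.0, 3.0]
W = [0.5, -0.25, 0.1]
mu = -0.3

potential = perceptron(x, W, mu, identity)   # Y = Z with the identity activation
output = perceptron(x, W, mu, logistic)      # Y = g(Z) with a logistic activation
```

Because the inputs to `perceptron` are just numbers, the same function can take raw data or the outputs of other perceptrons, which is how layers are chained.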

The Activation Function

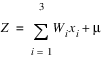

Although theoretically any differentiable function can be used as an activation function, the identity and sigmoid functions are the two most commonly used.

The identity activation function, also referred to as a linear activation function, is a flow-through mapping of the perceptron’s potential to its output:

g(Z) = Z

Output perceptrons in a forecasting network often use the identity activation function shown in An Identity (Linear) Activation Function.

If the identity activation function is used throughout the network, then it is easily shown that the network is equivalent to fitting a linear regression model of the form Yi = β0 + β1x1 + ... + βkxk, where x1, x2, ..., xk are the k network inputs, Yi is the ith network output and β0, β1, ..., βk are the coefficients in the regression equation. As a result, it is uncommon to find a neural network with identity activation used in all its perceptrons.
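A small numerical check makes the equivalence concrete. The sketch below assumes a hypothetical network with three inputs, two hidden perceptrons and one output, all using identity activation; the weights are arbitrary illustrative values. Multiplying the layers out gives regression coefficients that reproduce the network exactly.

```python
def linear_layer(x, weights, biases):
    """Potentials of one layer; identity activation returns them unchanged."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


# Hypothetical weights: 3 inputs -> 2 hidden perceptrons -> 1 output.
W1 = [[0.5, -1.0, 2.0], [1.5, 0.25, -0.5]]
b1 = [0.1, -0.2]
W2 = [[2.0, -1.0]]
b2 = [0.3]


def network(x):
    """Forward pass with identity activation in every perceptron."""
    return linear_layer(linear_layer(x, W1, b1), W2, b2)[0]


# Collapse the two layers into a single set of regression coefficients.
beta = [sum(W2[0][h] * W1[h][k] for h in range(2)) for k in range(3)]
beta0 = sum(W2[0][h] * b1[h] for h in range(2)) + b2[0]


def regression(x):
    """Y = beta0 + beta1*x1 + ... + betak*xk."""
    return beta0 + sum(b * xi for b, xi in zip(beta, x))


sample = [1.0, -2.0, 0.5]
gap = abs(network(sample) - regression(sample))
```

The hidden layer adds weights to estimate but no modeling flexibility, which is why all-identity networks are uncommon.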

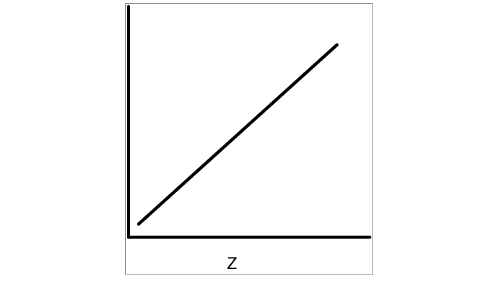

Sigmoid activation functions are differentiable functions that map the perceptron’s potential to a range of values, such as 0 to 1, i.e., $\Re^K \to \Re$ where K is the number of perceptron inputs.

Sigmoid Activation Function shows an example of a sigmoid activation function.

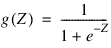

In practice, the most common sigmoid activation function is the logistic function that maps the potential into the range 0 to 1:

$$g(Z) = \frac{1}{1 + e^{-Z}}$$

Since 0 < g(Z) < 1, the logistic function is very popular for use in networks that output probabilities.

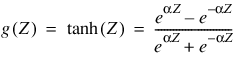

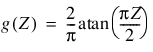

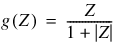

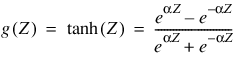

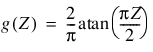

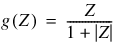

Other popular sigmoid activation functions include:

the hyperbolic-tangent

the arc-tangent

the squash activation function, see Elliott (1993).

It is easy to show that the hyperbolic-tangent and logistic activation functions are linearly related. Consequently, forecasts produced using logistic activation should be close to those produced using hyperbolic-tangent activation. However, one function may be preferred over the other when training performance is a concern. Researchers report that the training time using the hyperbolic-tangent activation function is shorter than using the logistic activation function.
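The linear relationship can be checked numerically. The sketch below relies on the standard identity tanh(z) = 2·logistic(2z) − 1; the function names are illustrative.

```python
import math


def logistic(z):
    """Logistic activation, mapping the potential into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh_from_logistic(z):
    """Rescale and shift the logistic function to obtain tanh."""
    return 2.0 * logistic(2.0 * z) - 1.0


# Largest difference between the library tanh and the rescaled logistic.
test_points = [-3.0, -1.0, 0.0, 0.5, 2.0]
max_gap = max(abs(math.tanh(z) - tanh_from_logistic(z)) for z in test_points)
```

Since one function is a rescaled and shifted copy of the other, a network using either can represent the same mappings after adjusting its weights and biases. The difference in training time mentioned above is an empirical matter.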

Network Applications

Forecasting using Neural Networks

There are numerous good statistical forecasting tools. Most require assumptions about the relationship between the variables being forecasted and the variables used to produce the forecast, as well as the distribution of forecast errors. Such statistical tools are referred to as parametric methods. ARIMA time series models, for example, assume that the time series is stationary, that the errors in the forecasts follow a particular ARIMA model, and that the probability distribution for the residual errors is Gaussian, see Box and Jenkins (1970). If these assumptions are invalid, then ARIMA time series forecasts can be substandard.

Neural networks, on the other hand, require few assumptions. Since neural networks can approximate highly non-linear functions, they can be applied without an extensive analysis of underlying assumptions.

Another advantage of neural networks over ARIMA modeling is the number of observations needed to produce a reliable forecast. ARIMA models generally require 50 or more equally spaced, sequential observations in time. In many cases, neural networks can also provide adequate forecasts with fewer observations by incorporating exogenous, or external, variables in the network’s input.

For example, a company applying ARIMA time series analysis to forecast business expenses would normally require each of its departments, and each sub-group within each department, to prepare its own forecast. For large corporations this can require fitting hundreds or even thousands of ARIMA models. With a neural network approach, the department and sub-group information could be incorporated into the network as exogenous variables. Although this can significantly increase the network’s training time, the result would be a single model for predicting expenses within all departments.

Linear least squares models are also popular statistical forecasting tools. These methods range from simple linear regression for predicting a single quantitative outcome to logistic regression for estimating probabilities associated with categorical outcomes. It is easy to show that simple linear least squares forecasts and logistic regression forecasts are equivalent to a feedforward network with a single layer. For this reason, single-layer feedforward networks are rarely used for forecasting. Instead multilayer networks are used.

Hutchinson (1994) and Masters (1995) describe using multilayer feedforward neural networks for forecasting. Multilayer feedforward networks are characterized by the forward-only flow of information in the network. The flow of information and computations in a feedforward network is always in one direction, mapping an M-dimensional vector of inputs to a C-dimensional vector of outputs, i.e., $\Re^M \to \Re^C$ where C < M.

There are many other types of networks without this feedforward requirement. Information and computations in a recurrent neural network, for example, flow in both directions. Output from one level of a recurrent neural network can be fed back, with some delay, as input into the same network (see Figure 14-2: Recurrent neural network with 4 inputs and 2 outputs on page 975). Recurrent networks are very useful for time series prediction; see Mandic and Chambers (2001).

Pattern Recognition using Neural Networks

Neural networks are also extensively used in statistical pattern recognition. Pattern recognition applications that make wide use of neural networks include:

natural language processing: Manning and Schütze (1999)

speech and text recognition: Lippmann (1989)

face recognition: Lawrence, et al. (1997)

playing backgammon: Tesauro (1990)

classifying financial news: Calvo (2001)

The interest in pattern recognition using neural networks has stimulated the development of important variations of feedforward networks. Two of the most popular are:

Self-Organizing Maps, also called Kohonen Networks, Kohonen (1995)

Radial Basis Function Networks, Bishop (1995).

Useful mathematical descriptions of the neural network methods underlying these applications are given by Bishop (1995), Ripley (1996), Mandic and Chambers (2001), and Abe (2001). Warner and Misra (1996) provide an excellent overview of neural networks from a statistical viewpoint.

Neural Networks for Classification

Classifying observations using prior concomitant information is possibly the most popular application of neural networks. Data classification problems abound in business and research. When decisions based upon data are needed, they can often be treated as a neural network data classification problem. Decisions to buy, sell, or hold a stock are decisions involving three choices. Classifying loan applicants as good or bad credit risks, based upon their application, is a classification problem involving two choices. Neural networks are powerful tools for making decisions or choices based upon data.

These same tools are ideally suited for automatic selection or decision-making. Incoming email, for example, can be examined to separate spam from important email using a neural network trained for this task. A good overview of solving classification problems using multilayer feedforward neural networks is found in Abe (2001) and Bishop (1995).

There are two popular methods for solving data classification problems using multilayer feedforward neural networks, depending upon the number of choices (classes) in the classification problem. If the classification problem involves only two choices, then it can be solved using a neural network with a single logistic output. This output estimates the probability that the input data belong to one of the two choices.

For example, a multilayer feedforward network with a single logistic output can be used to determine whether a new customer is credit-worthy. The network’s input would consist of information from the applicant’s credit application, such as age, income, etc. If the network output probability meets or exceeds some threshold value (such as 0.5) then the applicant’s credit application is approved. This is referred to as binary classification using a multilayer feedforward neural network.

If more than two classes are involved then a different approach is needed. A popular approach is to assign logistic output perceptrons to each class in the classification problem. The network assigns each input pattern to the class associated with the output perceptron that has the highest probability for that input pattern. However, this approach produces invalid probabilities since the sum of the individual class probabilities for each input is not equal to one, which is a requirement for any valid multivariate probability distribution.

To avoid this problem, the softmax activation function, see Bridle (1990), is applied to the network outputs so that they conform to the mathematical requirements of multivariate classification probabilities. If the classification problem has C categories, or classes, then each category is modeled by one of the network outputs. If $Z_i$ is the weighted sum of products between its weights and inputs for the ith output, i.e.:

$$Z_i = \sum_{j} w_{ij} x_j$$

then:

$$\text{softmax}_i = \frac{e^{Z_i}}{\sum_{j=1}^{C} e^{Z_j}}$$

The softmax activation function ensures that all outputs conform to the requirements for multivariate probabilities. That is:

$$0 < \text{softmax}_i < 1 \text{ for all } i = 1, 2, \ldots, C, \quad \text{and} \quad \sum_{i=1}^{C} \text{softmax}_i = 1$$

A pattern is assigned to the ith classification when softmaxi is the largest among all C classes.
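The contrast between independent logistic outputs and softmax outputs can be sketched as follows. The potentials are hypothetical values for a three-class problem, and subtracting the largest potential before exponentiating is a common numerical safeguard rather than part of the definition.

```python
import math


def logistic(z):
    """Logistic activation applied to a single output potential."""
    return 1.0 / (1.0 + math.exp(-z))


def softmax(potentials):
    """Map C output potentials to C probabilities that sum to 1."""
    shift = max(potentials)  # does not change the result; avoids overflow
    exps = [math.exp(z - shift) for z in potentials]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical potentials Z_1..Z_3 for one input pattern.
potentials = [2.0, 1.0, 0.5]

logistic_outputs = [logistic(z) for z in potentials]   # each in (0, 1), sum > 1
probabilities = softmax(potentials)                    # each in (0, 1), sum = 1

# Assign the pattern to the class with the largest softmax value.
predicted_class = probabilities.index(max(probabilities))
```

Both approaches pick the same class here, because each is monotone in the potential, but only the softmax values form a valid probability distribution.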

However, multilayer feedforward neural networks are only one of several popular methods for solving classification problems. Others include:

Support Vector Machines (SVM Neural Networks), Abe (2001),

Classification and Regression Trees (CART), Breiman, et al. (1984),

Quinlan’s classification algorithms C4.5 and C5.0, Quinlan (1993), and

Quick, Unbiased and Efficient Statistical Trees (QUEST), Loh and Shih (1997).

Support Vector Machines are simple modifications of traditional multilayer feedforward neural networks (MLFF) configured for pattern classification.

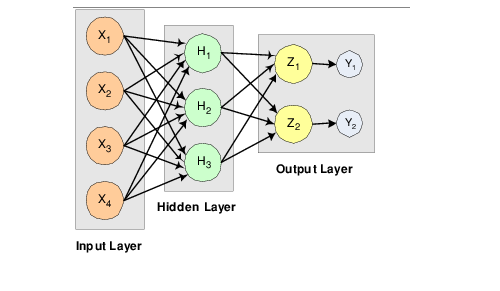

Multilayer Feedforward Neural Networks

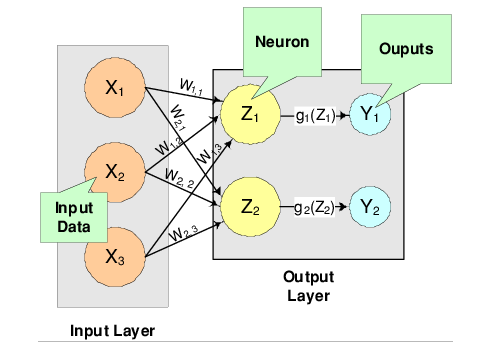

A multilayer feedforward neural network is an interconnection of perceptrons in which data and calculations flow in a single direction, from the input data to the outputs. The number of layers in a neural network is the number of layers of perceptrons. The simplest neural network is one with a single input layer and an output layer of perceptrons. The network in A Single-Layer Feedforward Neural Net illustrates this type of network. Technically, this is referred to as a one-layer feedforward network with two outputs because the output layer is the only layer with an activation calculation.

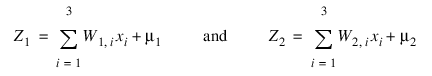

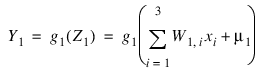

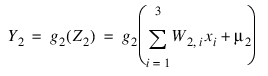

In this single-layer feedforward neural network, the network’s inputs are directly connected to the output layer perceptrons, Z1 and Z2.

The output perceptrons use activation functions, g1 and g2, to produce the outputs Y1 and Y2.

Since:

$$Z_1 = \sum_{i=1}^{m} W_{i1} x_i + \mu_1$$

and:

$$Z_2 = \sum_{i=1}^{m} W_{i2} x_i + \mu_2$$

where m is the number of network inputs, the single-layer neural network is equivalent to a linear regression model when the activation functions g1 and g2 are identity activation functions. Similarly, if g1 and g2 are logistic activation functions, then the single-layer neural network is equivalent to logistic regression. Because of this correspondence between single-layer neural networks and linear and logistic regression, single-layer neural networks are rarely used in place of linear and logistic regression.

The next most complicated neural network is one with two layers. This extra layer is referred to as a hidden layer. In general there is no restriction on the number of hidden layers. However, it has been shown mathematically that a two-layer neural network can accurately reproduce any differentiable function, provided the number of perceptrons in the hidden layer is unlimited.

However, increasing the number of perceptrons increases the number of weights that must be estimated in the network, which in turn increases the execution time for the network. Instead of increasing the number of perceptrons in the hidden layers to improve accuracy, it is sometimes better to add additional hidden layers, which typically reduce both the total number of network weights and the computational time. However, in practice, it is uncommon to see neural networks with more than two or three hidden layers.

Neural Network Error Calculations

Error Calculations for Forecasting

The error calculations used to train a neural network are very important. Researchers have investigated many error calculations in an effort to find a calculation with a short training time appropriate for the network’s application. Typically error calculations are very different depending primarily on the network’s application.

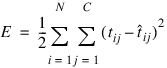

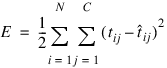

For forecasting, the most popular error function is the sum-of-squared errors, or one of its scaled versions. This is analogous to using the minimum least squares optimization criterion in linear regression. Like least squares, the sum-of-squared errors is calculated by looking at the squared difference between what the network predicts for each training pattern and the target value, or observed value, for that pattern. Formally, the equation is the same as one-half the traditional least squares error:

$$E = \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{C} \left(t_{ij} - \hat{t}_{ij}\right)^2$$

where N is the total number of training cases, C is equal to the number of network outputs, $t_{ij}$ is the observed output for the ith training case and the jth network output, and $\hat{t}_{ij}$ is the network’s forecast for that case.

Common practice recommends fitting a different network for each forecast variable. That is, the recommended practice is to use C=1 when using a multilayer feedforward neural network for forecasting. For classification problems with more than two classes, it is common to associate one output with each classification category, i.e., C = number of classes.

Notice that in ordinary least squares, the sum-of-squared errors are not multiplied by one-half. Although this has no impact on the final solution, it significantly reduces the number of computations required during training.

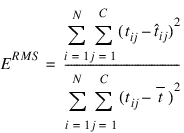

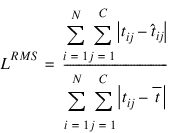

Also note that as the number of training patterns increases, the sum-of-squared errors increases. As a result, it is often useful to use the root-mean-square (RMS) error instead of the unscaled sum-of-squared errors:

where

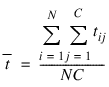

is the average output:

Unlike the unscaled sum-of-squared errors, ERMS does not increase as N increases. Smaller values of ERMS indicate that the network predicts its training targets more closely. The smallest value, ERMS = 0, indicates that the network predicts every training target exactly. The largest value, ERMS = 1, indicates that the network predicts the training targets only as well as setting each forecast equal to the mean of the training targets.
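The behavior of the two error measures can be illustrated with a short sketch. It assumes the scaled error is the squared forecast error divided by the squared deviation of the targets from their mean, which is consistent with the values 0 and 1 described above. Whether a square root is also applied is an assumption left open here; the sketch omits it. The function names and data are hypothetical.

```python
def sum_squared_error(targets, forecasts):
    """E = 1/2 * sum over cases i and outputs j of (t_ij - forecast_ij)^2."""
    return 0.5 * sum((t - f) ** 2
                     for t_row, f_row in zip(targets, forecasts)
                     for t, f in zip(t_row, f_row))


def scaled_error(targets, forecasts):
    """Squared forecast error divided by squared deviation from the target mean."""
    flat = [t for row in targets for t in row]
    mean = sum(flat) / len(flat)
    spread = sum((t - mean) ** 2 for t in flat)
    return 2.0 * sum_squared_error(targets, forecasts) / spread


# Hypothetical data: N = 4 training cases, C = 1 network output.
targets = [[1.0], [2.0], [3.0], [4.0]]
mean_only = [[2.5], [2.5], [2.5], [2.5]]          # forecast the mean every time
off_by_half = [[t[0] + 0.5] for t in targets]     # constant forecast error

# Duplicating the data doubles E but leaves the scaled error unchanged.
e_small = sum_squared_error(targets, off_by_half)
e_large = sum_squared_error(targets * 2, off_by_half * 2)
scaled_small = scaled_error(targets, off_by_half)
scaled_large = scaled_error(targets * 2, off_by_half * 2)
```

Here `scaled_error(targets, targets)` is 0 and `scaled_error(targets, mean_only)` is 1, matching the two reference values in the text.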

Notice that the root-mean-squared error is related to the sum-of-squared error by a simple scale factor:

$$E_{RMS} = \frac{2E}{\sum_{i=1}^{N} \sum_{j=1}^{C} \left(t_{ij} - \bar{t}\right)^2}$$

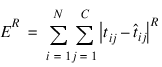

Another popular error calculation for forecasting from a neural network is the Minkowski-R error. The sum-of-squared error, E, and the root-mean-squared error, ERMS, are both theoretically motivated by assuming the noise in the target data is Gaussian. In many cases, this assumption is invalid. A generalization of the Gaussian distribution to other distributions gives the following error function, referred to as the Minkowski-R error:

$$E_R = \sum_{i=1}^{N} \sum_{j=1}^{C} \left|t_{ij} - \hat{t}_{ij}\right|^R$$

Notice that $E_R = 2E$ when R = 2.

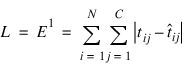

A good motivation for using ER instead of E is to reduce the impact of outliers in the training data. The usual error measures, E and ERMS, emphasize larger differences between the training data and network forecasts since they square those differences. If outliers are expected, then it is better to de-emphasize larger differences. This can be done by using the Minkowski-R error with R = 1. When R = 1, the Minkowski-R error simplifies to the sum of absolute differences:

$$L = E_1 = \sum_{i=1}^{N} \sum_{j=1}^{C} \left|t_{ij} - \hat{t}_{ij}\right|$$

L is also referred to as the Laplacian error. This name is derived from the fact that it can be theoretically justified by assuming the noise in the training data follows a Laplacian, rather than Gaussian, distribution.
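The effect of R on outliers can be seen in a small example. The sketch assumes the Minkowski-R error is the sum of absolute differences raised to the power R, which agrees with the statements that ER = 2E when R = 2 and that R = 1 gives the sum of absolute differences. The data, including the deliberately poor last forecast, are hypothetical.

```python
def minkowski_error(targets, forecasts, r):
    """E_R = sum over cases and outputs of |t_ij - forecast_ij| ** r."""
    return sum(abs(t - f) ** r
               for t_row, f_row in zip(targets, forecasts)
               for t, f in zip(t_row, f_row))


# Hypothetical data: the last forecast misses by 5.0, the others by 0.5.
targets = [[1.0], [2.0], [3.0], [4.0]]
forecasts = [[1.5], [2.5], [3.5], [9.0]]

squared = minkowski_error(targets, forecasts, 2)     # R = 2, equal to 2E
laplacian = minkowski_error(targets, forecasts, 1)   # R = 1, the Laplacian error L

# Fraction of each error total contributed by the single outlier.
outlier_share_squared = 5.0 ** 2 / squared
outlier_share_laplacian = 5.0 / laplacian
```

The outlier accounts for about 97% of the squared error but about 77% of the Laplacian error, so the weights found by minimizing L are pulled less strongly toward the outlier.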

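Of course, similar to E, L generally increases when the number of training cases increases. Similar to ERMS, a scaled version of the Laplacian error can be calculated using the following formula:

$$L_{RMS} = \frac{\sum_{i=1}^{N} \sum_{j=1}^{C} \left|t_{ij} - \hat{t}_{ij}\right|}{\sum_{i=1}^{N} \sum_{j=1}^{C} \left|t_{ij} - \bar{t}\right|}$$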

Cross-Entropy Error for Binary Classification

As previously mentioned, multilayer feedforward neural networks can be used for both forecasting and classification applications. Training a forecasting network involves finding the network weights that minimize either the Gaussian or Laplacian error, E or L, respectively, or equivalently their scaled versions, ERMS or LRMS. Although these error calculations can be adapted for use in classification by setting the target classification variable to zeros and ones, this is not recommended. Use of the sum-of-squared and Laplacian error calculations is based on the assumption that the target variable is continuous. In classification applications, the target variable is a discrete random variable with C possible values, where C = number of classes.

A multilayer feedforward neural network for classifying patterns into one of only two categories is referred to as a binary classification network. It has a single output: the estimated probability that the input pattern belongs to one of the two categories. The probability that it belongs to the other category is equal to one minus this probability, i.e., P(C2) = P(not C1) = 1 – P(C1).

Binary classification applications are very common. Any problem requiring yes/no classification is a binary classification application. For example, deciding to sell or buy a stock is a binary classification problem. Deciding to approve a loan application is also a binary classification problem. Deciding whether to approve a new drug or to provide one of two medical treatments are binary classification problems.

For binary classification problems, only a single output is used, C=1. This output represents the probability that the training case should be classified as “yes.” A common choice for the activation function of the output of a binary classification network is the logistic activation function, which always results in an output in the range 0 to 1, regardless of the perceptron’s potential.

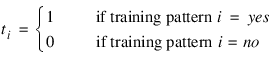

One choice for training binary classification networks is to use sum-of-squared errors with the class value of yes patterns coded as a 1 and the no classes coded as a 0, i.e.:

$$t_i = \begin{cases} 1 & \text{if training pattern } i \text{ is a “yes”} \\ 0 & \text{if training pattern } i \text{ is a “no”} \end{cases}$$

However, using either the sum-of-squared or Laplacian errors for training a network with these target values assumes that the noise in the training data is Gaussian or Laplacian, respectively. In binary classification, the zeros and ones follow neither distribution. They follow the Bernoulli distribution:

$$P(t_i = t) = p^{t}(1 - p)^{1 - t}$$

where p is equal to the probability that a randomly selected case belongs to the “yes” class.

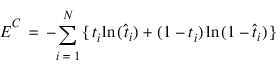

Modeling the binary classes as Bernoulli observations leads to the use of the cross-entropy error function described by Hopfield (1987) and Bishop (1995):

$$E_C = -\sum_{i=1}^{N} \left[ t_i \ln(\hat{t}_i) + (1 - t_i) \ln(1 - \hat{t}_i) \right]$$

where N is the number of training patterns, $t_i$ is the target value for the ith case (either 1 or 0), and $\hat{t}_i$ is the network output for the ith training pattern. This is equal to the neural network’s estimate of the probability that the ith training pattern should be classified as “yes.”

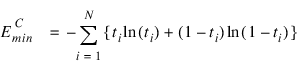

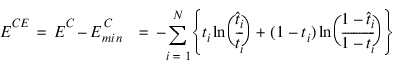

For situations in which the target variable is a probability in the range $0 < t_i < 1$, the value of the cross-entropy at the network’s optimum is equal to:

$$E_C^{\min} = -\sum_{i=1}^{N} \left[ t_i \ln(t_i) + (1 - t_i) \ln(1 - t_i) \right]$$

Subtracting $E_C^{\min}$ from $E_C$ gives an error term bounded below by zero, i.e., $E_{CE} \ge 0$, where:

$$E_{CE} = E_C - E_C^{\min} = -\sum_{i=1}^{N} \left[ t_i \ln\!\left(\frac{\hat{t}_i}{t_i}\right) + (1 - t_i) \ln\!\left(\frac{1 - \hat{t}_i}{1 - t_i}\right) \right]$$

This adjusted cross-entropy, ECE, is normally reported when training a binary classification network where $0 < t_i < 1$. Otherwise EC, the unadjusted cross-entropy error, is used. For ECE, small values, i.e. values near zero, indicate that the training resulted in a network able to classify the training cases with a low error rate.
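Both forms of the binary cross-entropy can be sketched directly from the Bernoulli model. The sketch assumes the standard form, the negative sum of t·ln(output) + (1 − t)·ln(1 − output), and obtains the adjusted value by subtracting the same sum evaluated with the outputs set equal to the targets. The function names, the clipping constant `eps` and the data are hypothetical.

```python
import math


def binary_cross_entropy(targets, outputs, eps=1e-12):
    """Negative Bernoulli log-likelihood of the targets given the outputs."""
    total = 0.0
    for t, y in zip(targets, outputs):
        y = min(max(y, eps), 1.0 - eps)   # keep log() away from 0; eps is arbitrary
        total -= t * math.log(y) + (1.0 - t) * math.log(1.0 - y)
    return total


def adjusted_cross_entropy(targets, outputs):
    """Cross-entropy minus its value when every output equals its target."""
    floor = binary_cross_entropy(targets, targets)
    return binary_cross_entropy(targets, outputs) - floor


# Targets coded as 1 ("yes") or 0 ("no") with hypothetical network outputs.
hard_error = binary_cross_entropy([1, 0, 1], [0.9, 0.2, 0.8])

# Targets that are themselves probabilities strictly between 0 and 1.
soft_targets = [0.3, 0.7]
at_optimum = adjusted_cross_entropy(soft_targets, [0.3, 0.7])
off_optimum = adjusted_cross_entropy(soft_targets, [0.5, 0.5])
```

With 0/1 targets the subtracted term is essentially zero, so the adjusted and unadjusted errors agree. With probability targets the adjustment moves the best achievable value to zero.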

Cross-Entropy Error for Multiple Classes

Using a multilayer feedforward neural network for binary classification is relatively straightforward. A network for binary classification only has a single output that estimates the probability that an input pattern belongs to the “yes” class, i.e., ti = 1. In classification problems with more than two mutually exclusive classes, the calculations and network configurations are not as simple.

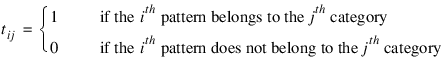

One approach is to use multiple network outputs, one for each of the C classes. Using this approach, the jth output for the ith training pattern is the estimated probability that the ith pattern belongs to the jth class, denoted by $\hat{t}_{ij}$. An easy way to estimate these probabilities is to use logistic activation for each output. This ensures that each output satisfies the univariate probability requirements, i.e., $0 \le \hat{t}_{ij} \le 1$.

However, since the classification categories are mutually exclusive, each pattern can only be assigned to one of the C classes, which means that the sum of these individual probabilities should always equal 1. Yet, if each output is the estimated probability for that class, it is very unlikely that:

$$\sum_{j=1}^{C} \hat{t}_{ij} = 1$$

In fact, the sum of the individual probability estimates can easily exceed 1 if logistic activation is applied to every output.

Support Vector Machine (SVM) neural networks use this approach with one modification. An SVM network classifies a pattern as belonging to the jth category if the activation calculation for that category exceeds a threshold and the other calculations do not exceed this value. That is, the ith pattern is assigned to the jth category if and only if $\hat{t}_{ij} > \delta$ and $\hat{t}_{ik} \le \delta$ for all k ≠ j, where δ is the threshold. If this does not occur, then the pattern is marked as unclassified.

Another approach to multiclass classification problems is to use the softmax activation function developed by Bridle (1990) on the network outputs. This approach produces outputs that conform to the requirements of a multinomial distribution. That is:

$$\sum_{j=1}^{C} \hat{t}_{ij} = 1 \quad \text{for all } i = 1, 2, \ldots, N$$

and:

$$0 \le \hat{t}_{ij} \le 1 \quad \text{for all } i = 1, 2, \ldots, N \text{ and } j = 1, 2, \ldots, C$$

The softmax activation function estimates classification probabilities as follows:

$$\hat{t}_{ij} = \frac{e^{Z_{ij}}}{\sum_{k=1}^{C} e^{Z_{ik}}}$$

where $Z_{ij}$ is the potential for the jth output perceptron, or category, using the ith pattern.

For this activation function, it is clear that:

$$0 \le \hat{t}_{ij} \le 1 \quad \text{for all } i = 1, 2, \ldots, N,\ j = 1, 2, \ldots, C$$

and:

$$\sum_{j=1}^{C} \hat{t}_{ij} = 1 \quad \text{for all } i = 1, 2, \ldots, N$$

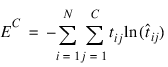

Modeling the C network outputs as multinomial observations leads to the cross-entropy error function described by Hopfield (1987) and Bishop (1995):

$$E_C = -\sum_{i=1}^{N} \sum_{j=1}^{C} t_{ij} \ln(\hat{t}_{ij})$$

where N is the number of training patterns, $t_{ij}$ is the target value for the jth class of the ith pattern (either 1 or 0), and $\hat{t}_{ij}$ is the network’s jth output for the ith pattern. $\hat{t}_{ij}$ is equal to the neural network’s estimate of the probability that the ith pattern should be classified into the jth category.

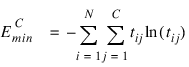

For situations in which the target variable is a probability in the range 0 < tij < 1, the value of the cross-entropy at the networks optimum is equal to:

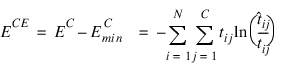

Subtracting this from EC gives an error term bounded below by zero, i.e., ECE ≥ 0 where:

This adjusted cross-entropy is normally reported when training a binary classification network where 0 < tij < 1. Otherwise EC , the non-adjusted cross-entropy error, is used. That is, when 1-in-C encoding of the target variable is used:

Small values, values near zero, indicate that the training resulted in a network with a low error rate and that patterns are being classified correctly most of the time.
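With 1-in-C target encoding, only the output for the correct class contributes to the error for each pattern. The sketch below assumes the standard multiclass form, the negative sum of t·ln(output) over patterns and classes; the function name, the clipping constant `eps` and the example outputs are hypothetical.

```python
import math


def cross_entropy(targets, outputs, eps=1e-12):
    """Negative sum over patterns i and classes j of t_ij * ln(output_ij)."""
    return -sum(t * math.log(max(y, eps))   # eps guards against log(0)
                for t_row, y_row in zip(targets, outputs)
                for t, y in zip(t_row, y_row))


# Two patterns, three classes, 1-in-C encoded targets.
targets = [[1, 0, 0],
           [0, 1, 0]]

# Hypothetical softmax outputs: each row sums to 1.
confident = [[0.7, 0.2, 0.1],
             [0.1, 0.8, 0.1]]
uncertain = [[0.4, 0.3, 0.3],
             [0.3, 0.4, 0.3]]

error_confident = cross_entropy(targets, confident)   # -(ln 0.7 + ln 0.8)
error_uncertain = cross_entropy(targets, uncertain)   # -(ln 0.4 + ln 0.4)
```

Both sets of outputs assign each pattern to the correct class, but the more confident outputs give the smaller error, which is what drives training toward probabilities near 1 for the correct class.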

Back-Propagation in Multilayer Feedforward Neural Networks

Sometimes a multilayer feedforward neural network is referred to incorrectly as a back-propagation network. The term back-propagation does not refer to the structure or architecture of a network. Back-propagation refers to the method used during network training. More specifically, back-propagation refers to a simple method for calculating the gradient of the network, that is, the first derivatives of the error function with respect to the weights in the network.

The primary objective of network training is to estimate an appropriate set of network weights based upon a training dataset. Many ways have been researched for estimating these weights, but they all involve minimizing some error function. In forecasting the most commonly used error function is the sum-of-squared errors:

Training uses one of several possible optimization methods to minimize this error term. Some of the more common are steepest descent, quasi-Newton, conjugate gradient, and various modifications of these optimization routines.
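As an illustration of minimizing an error function by steepest descent, the sketch below trains a single perceptron with linear activation on one input, using half the sum of squared differences between targets and outputs as the error. The fixed learning rate, the step count, the function names, and the data are all assumptions chosen for this example. Practical training routines typically use line searches or the more elaborate optimizers named above.

```python
def sum_of_squares(targets, outputs):
    """Half the sum of squared differences between targets and outputs."""
    return 0.5 * sum((t - y) ** 2 for t, y in zip(targets, outputs))

def steepest_descent(xs, ts, rate=0.01, steps=5000):
    """Fit y = w * x + b by repeatedly stepping against the gradient."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        ys = [w * x + b for x in xs]
        # Gradient of the error with respect to the weight and the bias.
        grad_w = sum((y - t) * x for x, y, t in zip(xs, ys, ts))
        grad_b = sum(y - t for y, t in zip(ys, ts))
        w -= rate * grad_w
        b -= rate * grad_b
    return w, b

# Hypothetical, noise-free training data generated from t = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ts = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = steepest_descent(xs, ts)
final_error = sum_of_squares(ts, [w * x + b for x in xs])
print(w, b, final_error)
```

Because the perceptron is linear, the weights converge toward the ordinary least-squares solution, which matches the earlier observation that such a network is equivalent to a linear regression fit.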

Back-propagation is a method for calculating the first derivative, or gradient, of the error function required by some optimization methods. It is certainly not the only method for estimating the gradient. However, it is the most efficient. In fact, some will argue that the development of this method by Werbos (1974), Parker (1985) and Rumelhart, Hinton and Williams (1986) contributed to the popularity of neural network methods by significantly reducing the network training time and making it possible to train networks consisting of a large number of inputs and perceptrons.

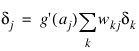

Simply stated, back-propagation is a method for calculating the first derivative of the error function with respect to each network weight. Bishop (1995) derives and describes these calculations for the two most common forecasting error functions: the sum-of-squared errors and Laplacian error functions. Abe (2001) gives the description for the classification error function, the cross-entropy error function. For all of these error functions, the basic formula for the first derivative of the error $E$ with respect to the network weight $w_{ji}$, which the jth perceptron applies to the output from the ith perceptron, is:

$$\frac{\partial E}{\partial w_{ji}} = \delta_j Z_i$$

where $Z_i = g(a_i)$ is the output from the ith perceptron after activation, and $\partial E / \partial w_{ji}$ is the derivative for a single output and a single training pattern. The overall estimate of the first derivative with respect to $w_{ji}$ is obtained by summing this calculation over all N training patterns and C network outputs.

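The term back-propagation gets its name from the way the term $\delta_j$ in the back-propagation formula is calculated:

$$\delta_j = g'(a_j) \sum_k w_{kj} \, \delta_k$$

where the summation is over all perceptrons k that use the activation from the jth perceptron, $g(a_j)$. Each $\delta_j$ is therefore computed from the $\delta_k$ of the perceptrons that follow it, so the error terms propagate backward from the output layer toward the inputs.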
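The backward calculation can be sketched in Python for a very small network. The sketch assumes two inputs, two hidden perceptrons with logistic activation, one linear output, no bias terms, and half the squared error for a single training pattern. It also assumes that `w_hidden[j][i]` is the weight that hidden perceptron j applies to input i. All names and numeric values are hypothetical. A central finite-difference estimate is included only as a check on the back-propagated gradient.

```python
import math

def logistic(a):
    return 1.0 / (1.0 + math.exp(-a))

def forward(w_hidden, w_out, x):
    """Return hidden potentials a_j, hidden outputs z_j, and the output y."""
    a = [sum(w * xi for w, xi in zip(row, x)) for row in w_hidden]
    z = [logistic(aj) for aj in a]
    y = sum(w * zj for w, zj in zip(w_out, z))
    return a, z, y

def error(w_hidden, w_out, x, t):
    """Half the squared error for one pattern and one output."""
    _, _, y = forward(w_hidden, w_out, x)
    return 0.5 * (y - t) ** 2

def backprop(w_hidden, w_out, x, t):
    """Gradient of the error with respect to every weight."""
    _, z, y = forward(w_hidden, w_out, x)
    # Output perceptron: linear activation, so g'(a) = 1.
    delta_out = y - t
    grad_out = [delta_out * zj for zj in z]
    # Hidden perceptrons: delta_j = g'(a_j) * w_kj * delta_k, where the
    # logistic derivative is g'(a_j) = z_j * (1 - z_j).
    delta_hidden = [zj * (1.0 - zj) * wk * delta_out
                    for zj, wk in zip(z, w_out)]
    grad_hidden = [[dj * xi for xi in x] for dj in delta_hidden]
    return grad_hidden, grad_out

def numeric_grad_hidden(w_hidden, w_out, x, t, j, i, h=1e-6):
    """Central finite-difference estimate for one hidden-layer weight."""
    plus = [row[:] for row in w_hidden]
    minus = [row[:] for row in w_hidden]
    plus[j][i] += h
    minus[j][i] -= h
    return (error(plus, w_out, x, t) - error(minus, w_out, x, t)) / (2 * h)

# Hypothetical weights, input pattern, and target.
w_hidden = [[0.1, -0.2], [0.4, 0.3]]
w_out = [0.5, -0.6]
x = [1.0, 2.0]
t = 0.25

grad_hidden, grad_out = backprop(w_hidden, w_out, x, t)
print(grad_hidden, grad_out)
```

The finite-difference check needs two forward passes per weight, whereas the back-propagated gradient needs one forward and one backward pass for all weights together, which is the source of the efficiency advantage described earlier.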

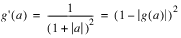

The derivative of the activation function, $g'(a_j)$, varies among these functions. See Activation Functions and Their Derivatives.

Version 2017.0

Copyright © 2017, Rogue Wave Software, Inc. All Rights Reserved.
